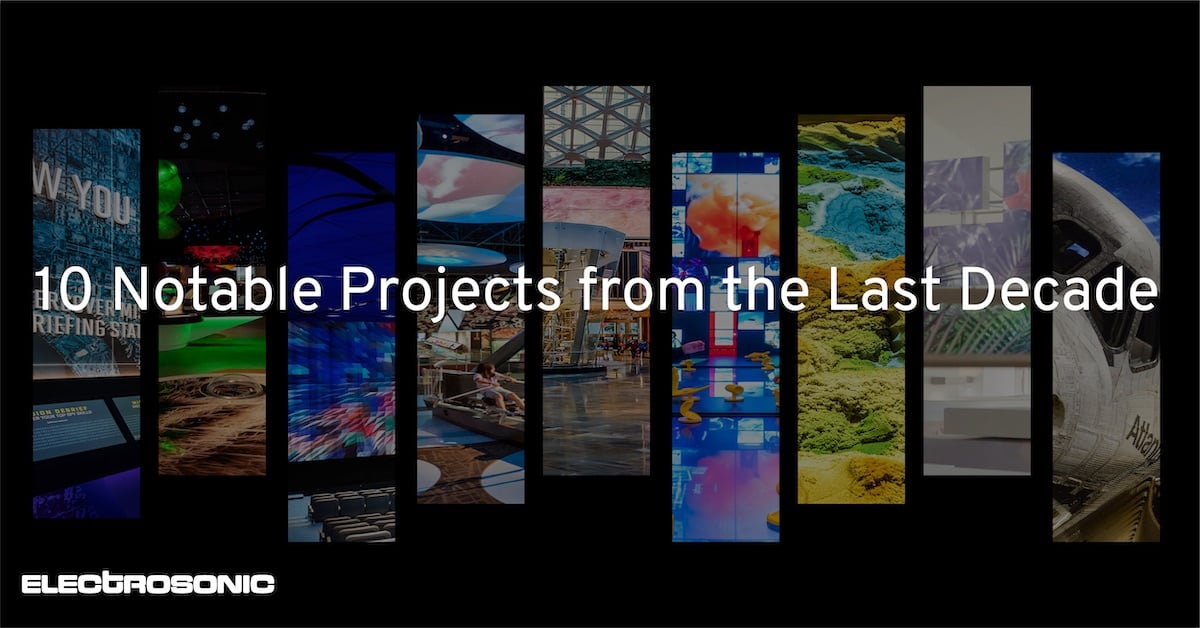

How to Use AI to Augment Your Creative Potential

Artificial intelligence (AI) isn’t the stuff of science fiction anymore. In fact, it’s powering billions of processes and workflows for companies worldwide. It’s become a key component in almost every industry, including audiovisual (AV). One newer application combines two AI capabilities, computer vision and image generation, and is described as visual AI.

Those involved in the creative development for AV immersive and experiential design, along with the venues that want to create these, can now use AI to augment creative potential. Leveraging visual AI could become a critical component of your next big project, so you’ll want to understand what it is, how it works and how it will transform creative.

What Is Visual AI?

Visual AI combines computer vision and image generation. Together, these two aspects of AI technology can enable some exciting things. Computer vision describes connecting a camera to a computer running an AI model that can “see” things. This has many familiar use cases, including:

- The ability to identify an object, which a self-driving car may use to detect a red light ahead.

- Person detection, such as a doorbell camera’s capabilities.

- Facial detection used by people counters.

- Facial recognition, which perceives a face and recognizes whom it is based on a database of known people for security applications.

Other types of computer vision often work together to serve a specific function.

Image generation is the second part of visual AI. The AI receives a prompt, which can be manual, as with DALL-E or Midjourney, which create images from textual descriptions. The input can also be automated: games such as Minecraft and No Man’s Sky generate 2D or 3D worlds algorithmically from a seed value rather than from a typed prompt, a technique usually called procedural generation.

By combining computer vision and image generation, opportunities to augment creative become possible. So, how does visual AI create these images, exactly?

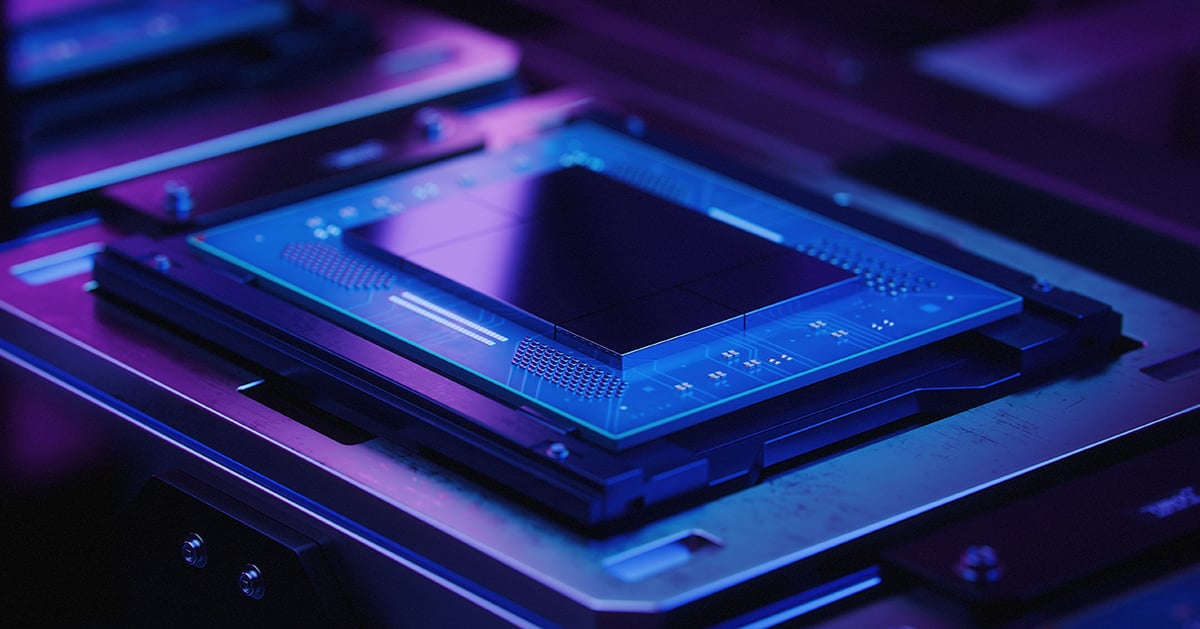

Visual AI and AI Models

Visual AI predominantly relies upon AI models to perform the function of image generation. AI models come to fruition through “training” the AI with data. The more data it absorbs, the more accurate it becomes.

For example, AI models have become crucial in healthcare diagnosis for conditions like skin cancer. The AI model ingests a library of photos, some that show actual skin cancer and others that do not. As the model matures, clinicians then ask it to discern if a single image is or isn’t skin cancer. It then applies the patterns it learned from that library to make the most informed decision.
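
The train-then-classify idea can be sketched in a few lines of Python. This is only a toy illustration, not how a diagnostic system works: real visual AI models are typically deep neural networks trained on the images themselves. Here we assume each image has already been reduced to two hypothetical numbers, and the function names `train` and `classify` are ours.

```python
from math import dist


def train(examples):
    """Learn one average feature vector (a centroid) per label."""
    sums, counts = {}, {}
    for features, label in examples:
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: [value / counts[label] for value in total]
        for label, total in sums.items()
    }


def classify(model, features):
    """Return the label whose centroid is closest to the new sample."""
    return min(model, key=lambda label: dist(model[label], features))


# Hypothetical labeled "library": two made-up measurements per image.
examples = [
    ([0.9, 0.8], "suspicious"),
    ([0.8, 0.9], "suspicious"),
    ([0.1, 0.2], "benign"),
    ([0.2, 0.1], "benign"),
]

model = train(examples)              # training happens once
print(classify(model, [0.7, 0.75]))  # a new, unseen sample
```

Note that after training, `classify` consults only the two learned averages in `model`, not the original examples. That is the sense in which a trained model carries what it learned rather than a copy of its library.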

The foundation of visual AI is modeling, and content creators in the experiential design space have plenty of content to feed these models. Thus, it’s a natural connection to apply this technology to the industry. It can be a non-human assistant to content creators.

Visual AI Could Be Your New Colleague

Visual AI has some major points that make it an excellent way to augment your current process. It can create and identify imagery much faster than humans. Its accuracy is high on its own and even better with human help. Finally, it’s relatively accessible through new tools. This human-computer interaction could be the next horizon of content.

The expectations for this emerging technology are that it will become one more tool—possibly the smartest—in your tech stack to push content creation forward. Next, let’s review the possible applications.

Visual AI Applications

There are two opportunities regarding how you can apply visual AI—self-generative content and computer vision for personalization.

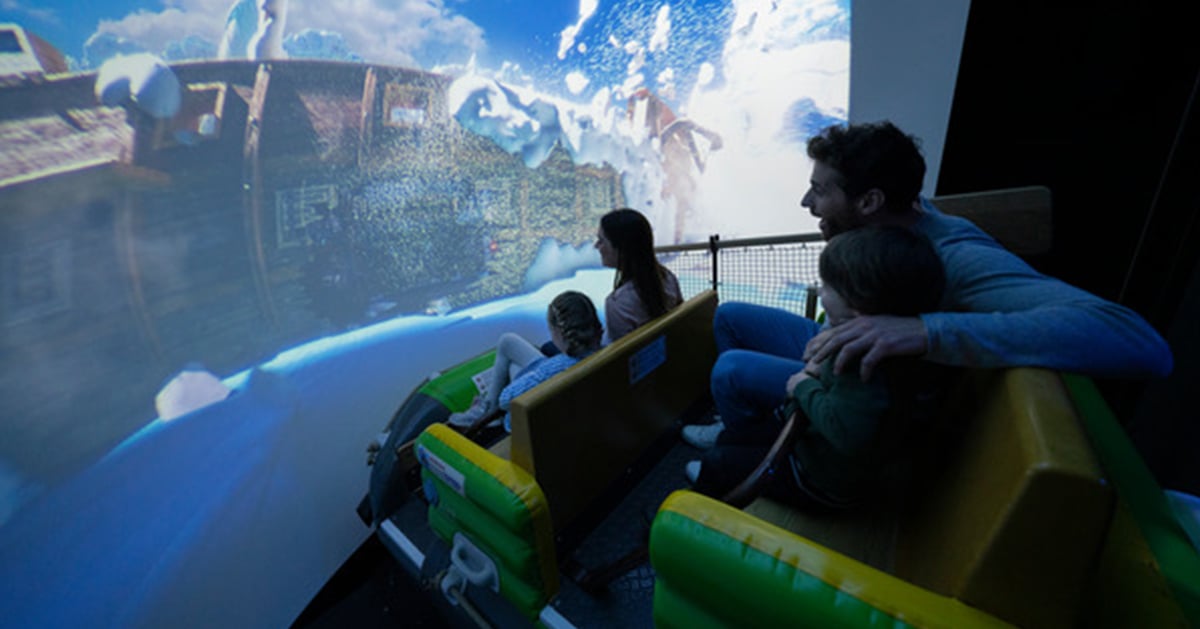

Self-Generative Content

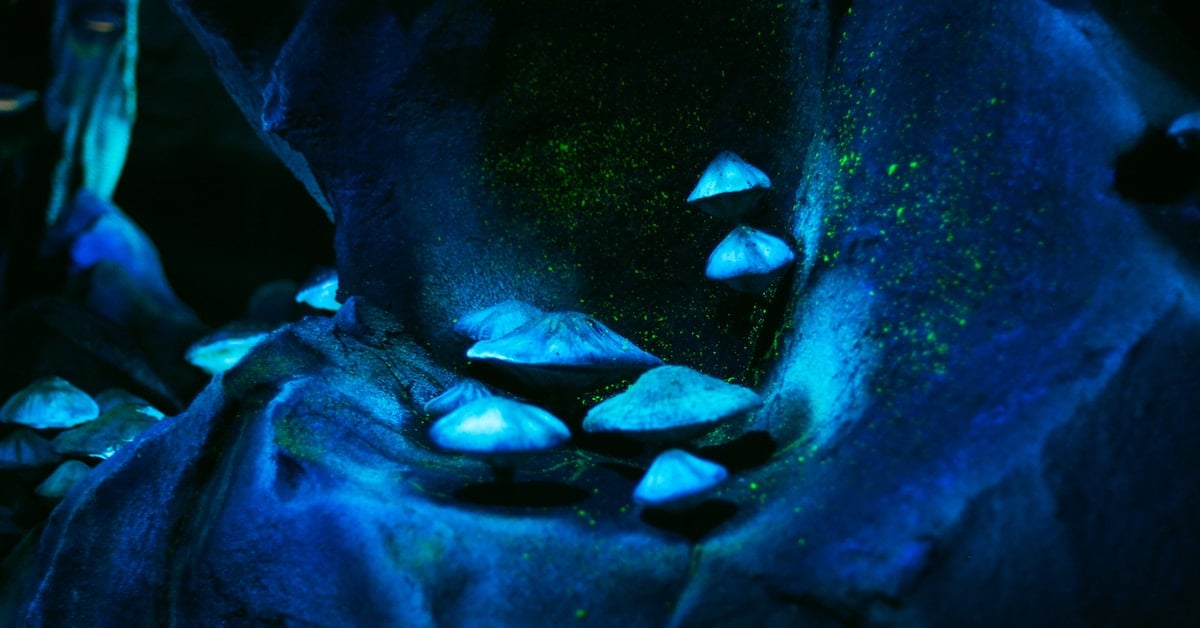

In this scenario, the AI is the content creator. Based on the prompts it receives, it produces images, videos and virtual environments. With this in place, the quantity of creative you generate could increase immensely. It could be ideal for the less complex parts of the design, leaving creatives free to focus on the more demanding pieces.

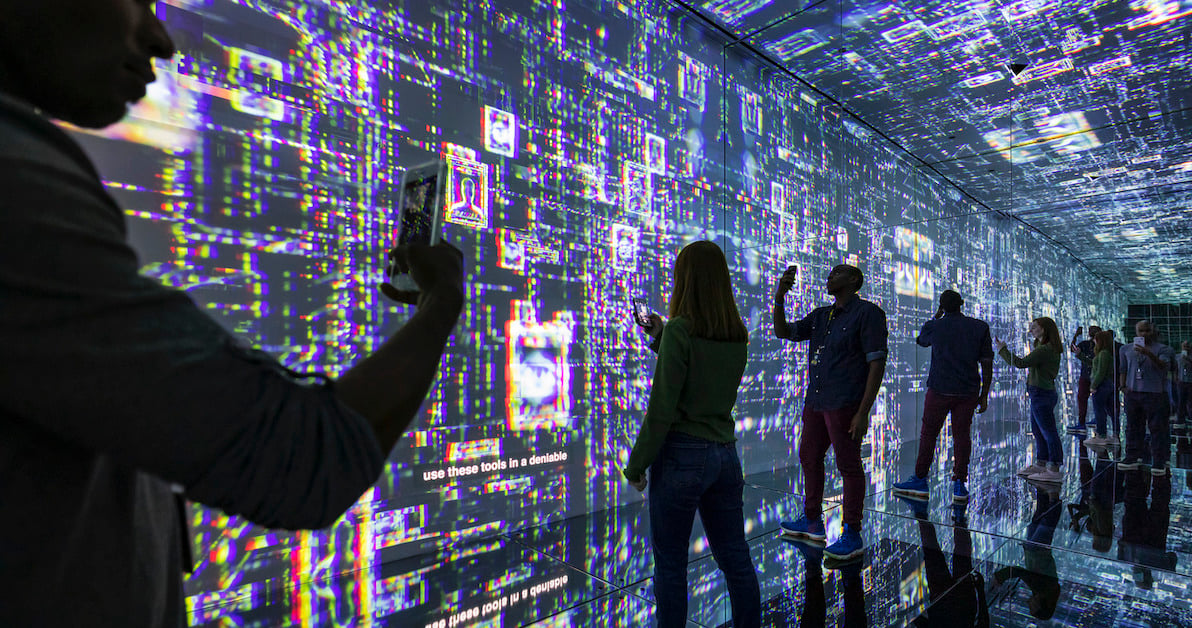

Computer Vision for Personalization

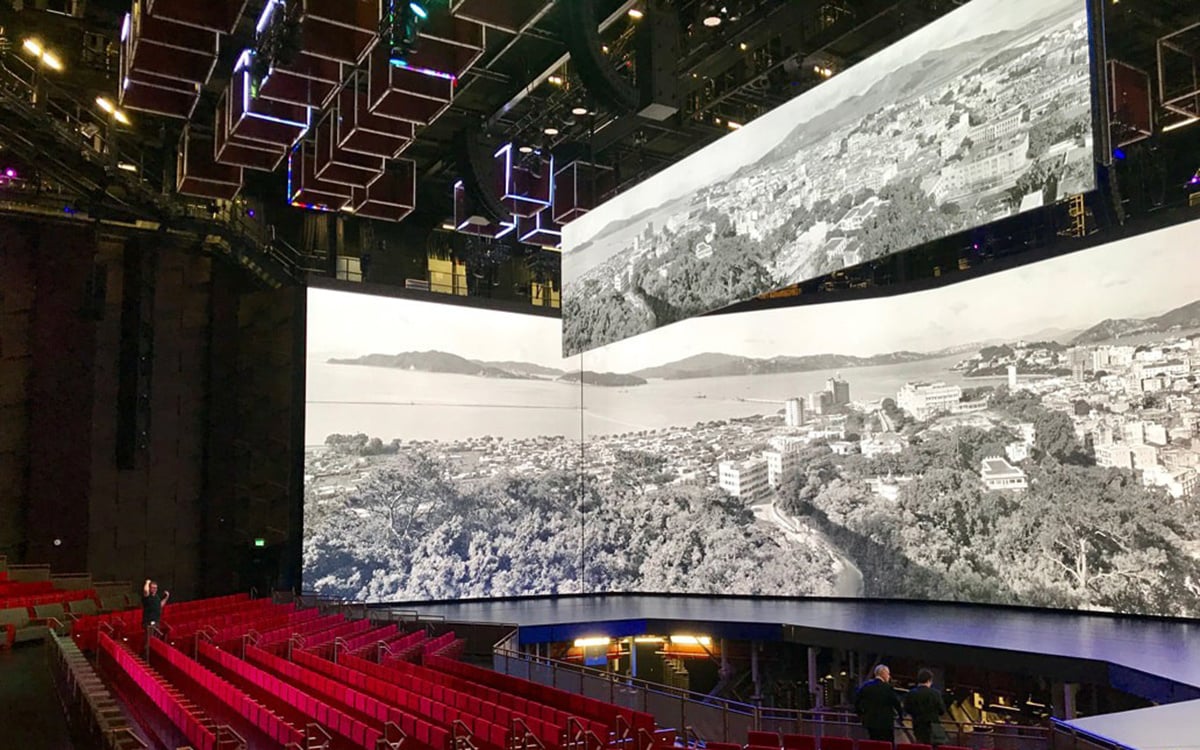

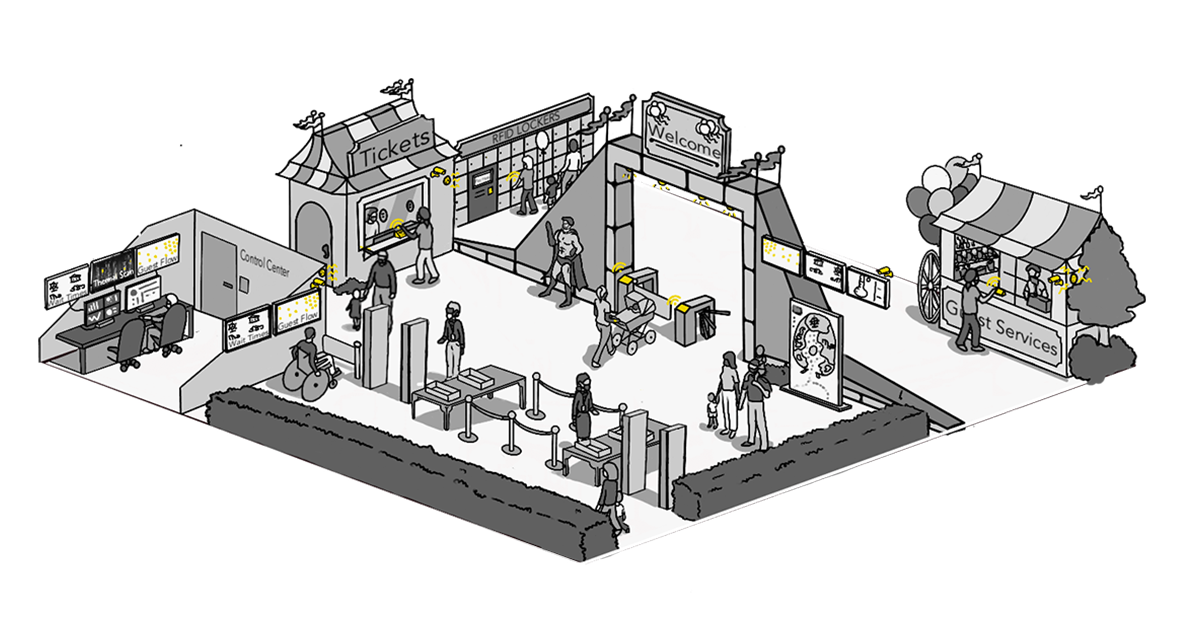

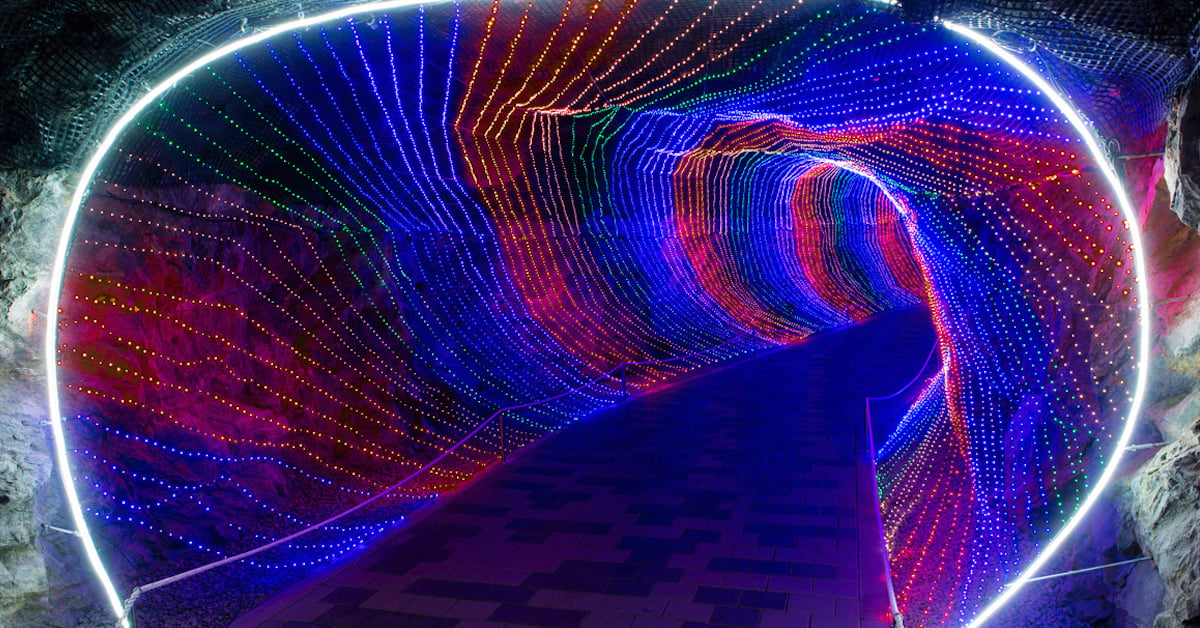

In this application, the AI acts as a trigger via either demographics or facial recognition. When it detects these things, the individual could encounter unique experiences just for them. In terms of technology-rich immersive design, personalization has been both an opportunity and a challenge. How do you customize this in a large venue on a one-to-one basis?

Visual AI may be the bridge to this. It elevates the immersion and could work in concert with other trigger technology (e.g., LIDAR), delivering truly one-of-a-kind moments. The excitement and anticipation of such times would be a huge draw for visitors.

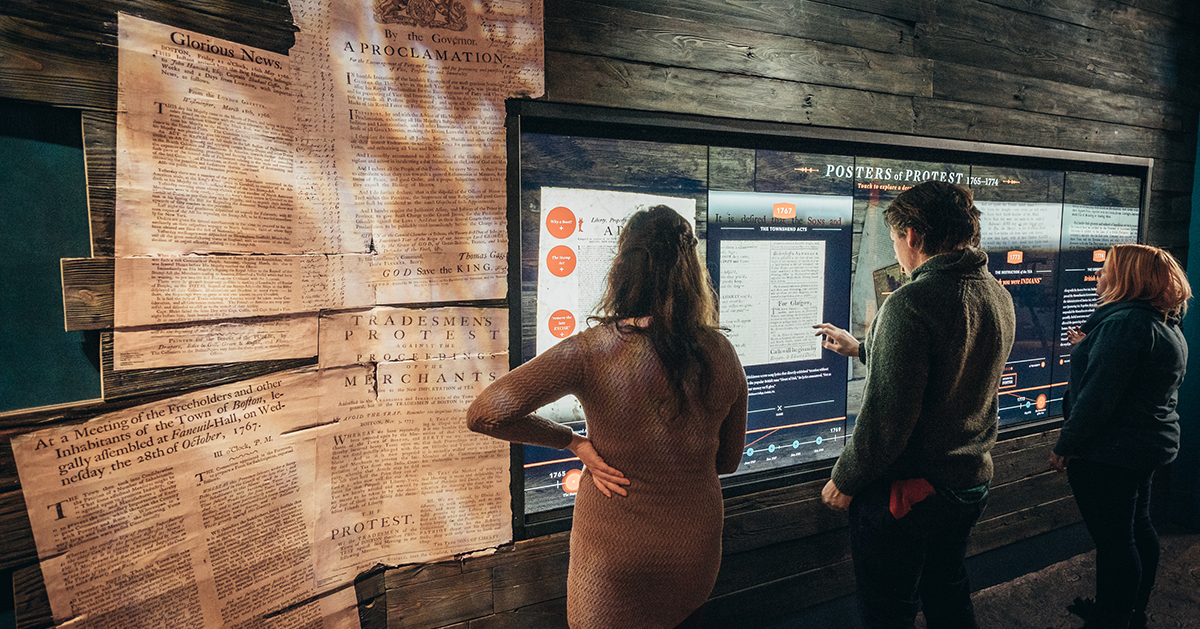

Another aspect of this use case could include the recognition of facial expressions and emotional gestures. Research is underway on this, and it looks beyond the familiar feelings of happiness or sadness to detect things like boredom or confusion. That could be helpful in experiential design. For example, if the AI identified boredom, which isn’t the kind of emotion you’d hope visitors feel at your venue, specific content could appear to try to shift that into enjoyment.
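
As a rough sketch of how such a trigger might be wired up, the Python below maps a detected emotion to a content cue. Everything here is an assumption for illustration: the emotion labels, the cue names, the confidence score and the 0.7 threshold are hypothetical and do not come from any particular product.

```python
# Hypothetical mapping from a detected emotion to the content cue it triggers.
CONTENT_BY_EMOTION = {
    "boredom": "high_energy_scene",
    "confusion": "wayfinding_hint",
}
DEFAULT_CONTENT = "ambient_loop"


def choose_content(emotion, confidence, threshold=0.7):
    """Pick a content cue, falling back to the default for weak or unmapped detections."""
    if confidence < threshold:
        return DEFAULT_CONTENT
    return CONTENT_BY_EMOTION.get(emotion, DEFAULT_CONTENT)


print(choose_content("boredom", 0.85))  # confident detection
print(choose_content("boredom", 0.40))  # weak detection keeps the default
```

The threshold reflects one possible design choice: when the detection is uncertain, the experience stays on its default content instead of reacting to a guess.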

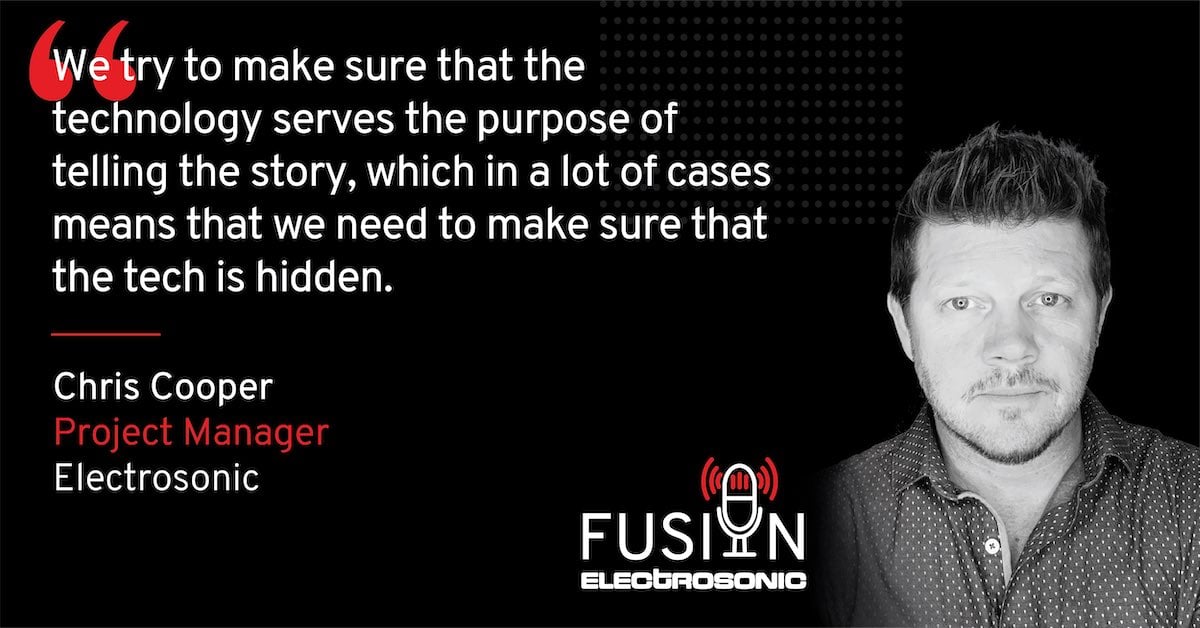

In both cases, the AI doesn’t replace you or your team of creatives. It simply offers an assist. The dire warnings about AI supplanting humans aren’t accurate in general or in this situation. A good analogy is IBM’s Deep Blue. In 1997, it defeated the reigning world chess champion, Garry Kasparov. That wasn’t the end of people playing chess. Rather, players began to leverage AI to train and improve their skills. The same thing is possible with visual AI; the more it trains and the more complete the prompts are, the better the content will be.

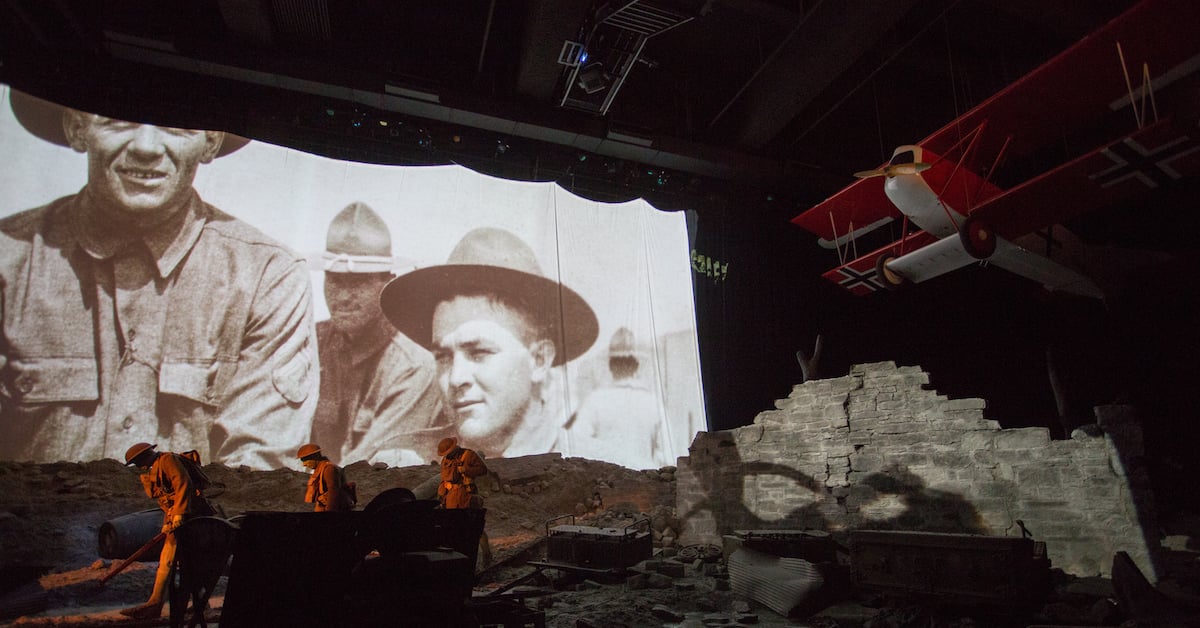

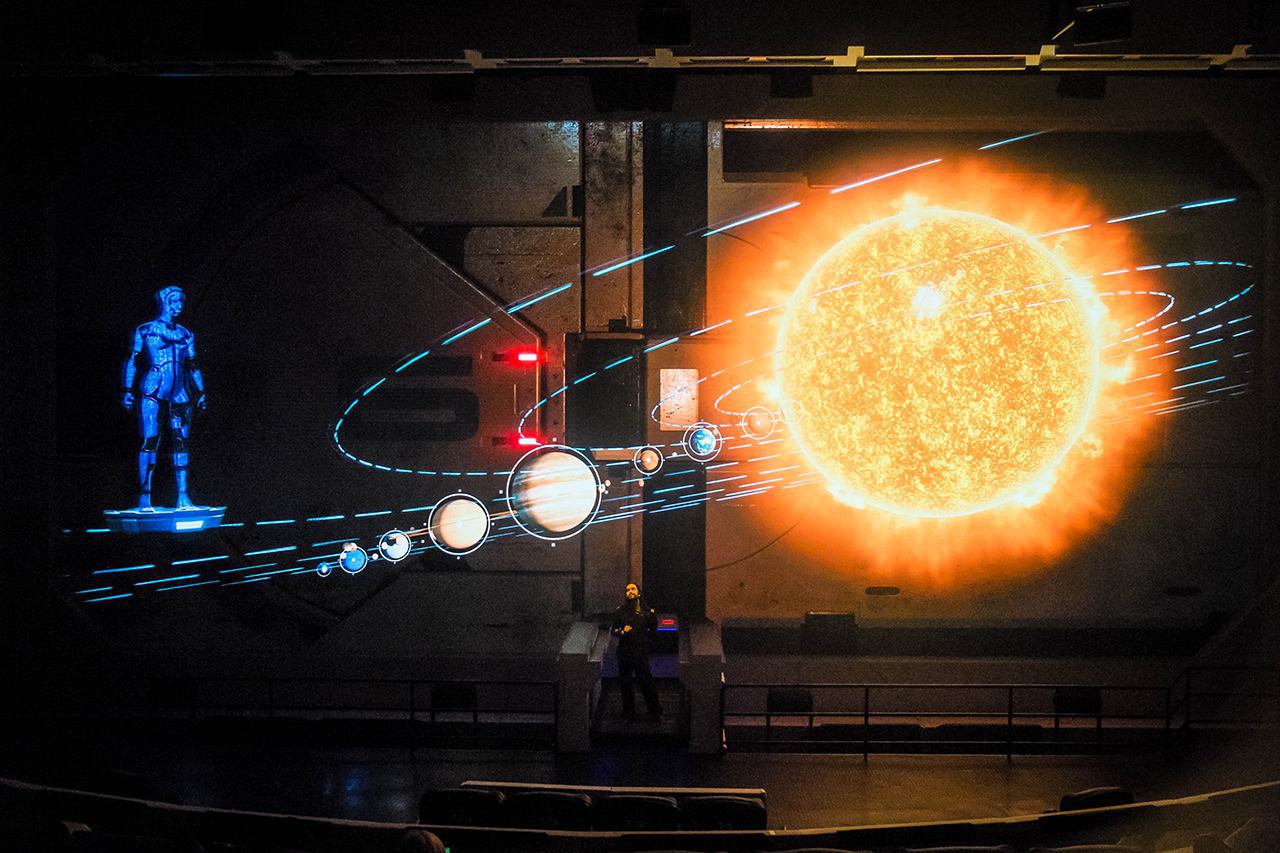

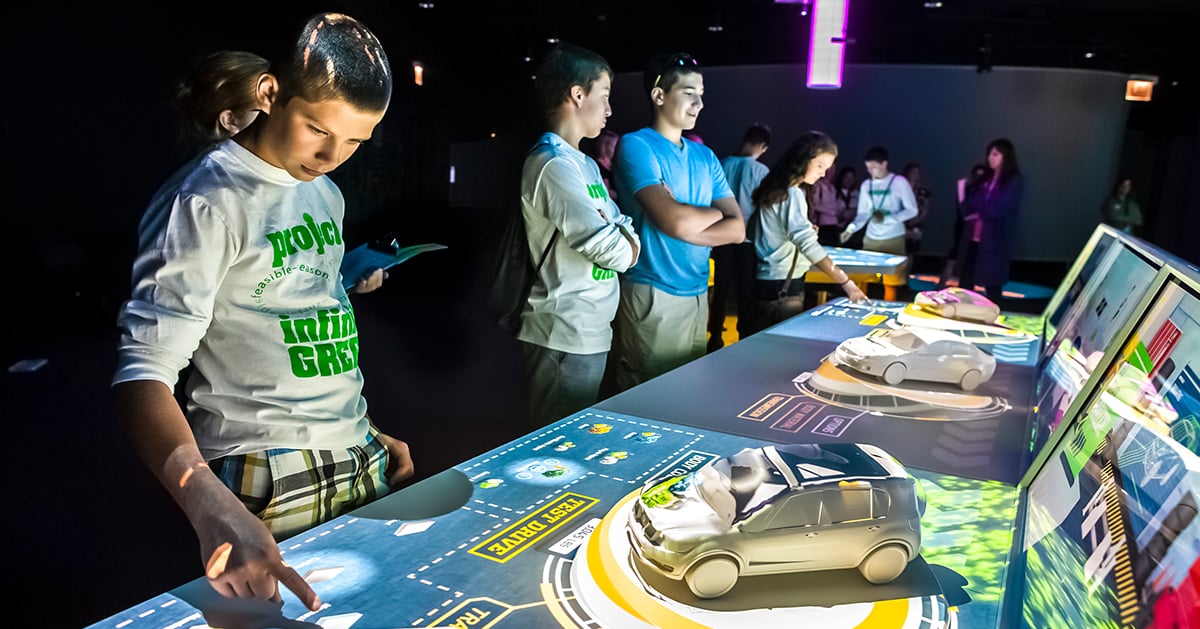

Multidisciplinary studios like Float4 and RealMotion already use this technology to integrate digital experiences into physical spaces. Most are only scratching the surface of what the technology can do. In the future, it could be producing complex videos or creating virtual worlds. It’s an exciting time to imagine what it will be able to do and to empower creators with new tools.

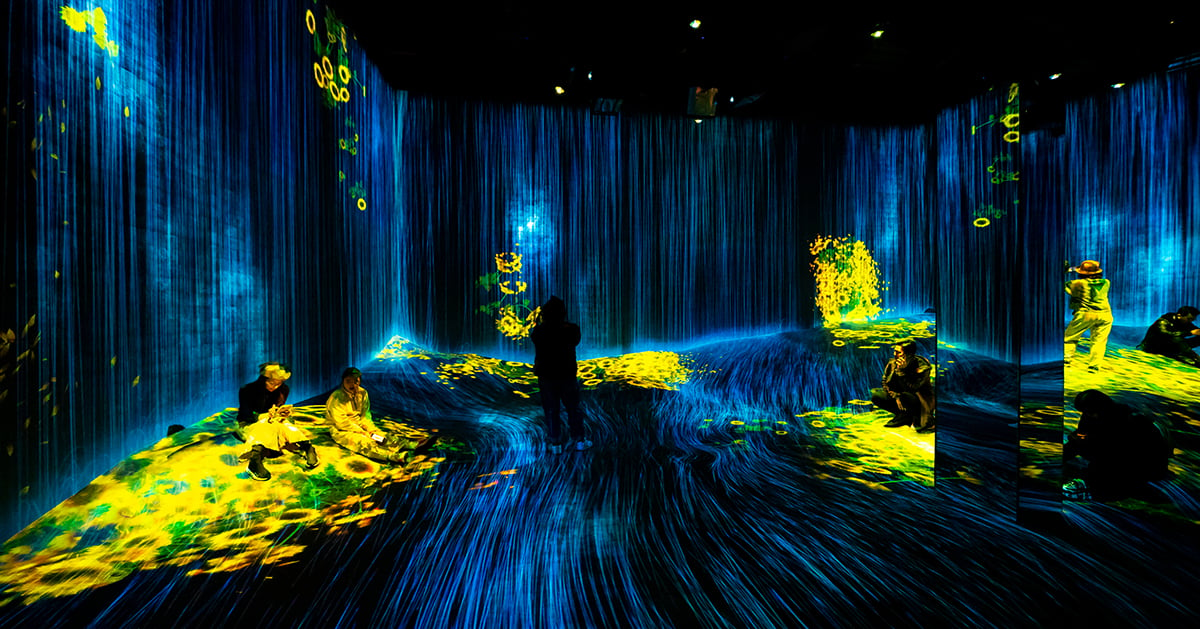

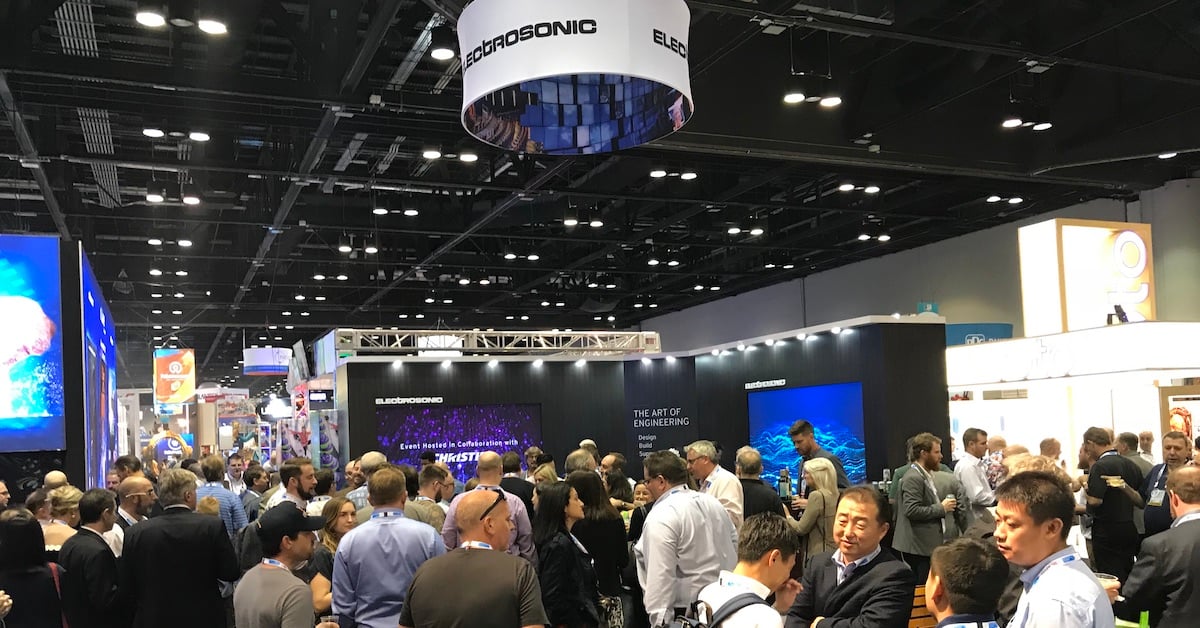

Visual AI Is One More Way for Experiences to Leverage the Technology

AI is already a part of the AV industry. It’s playing a role in sustainability, interactive exhibits, virtual reality and the creation of simple, intuitive user interfaces. Visual AI adds another component that can help experiences captivate audiences and simplify development.

Ryan Poe

Ryan Poe, Electrosonic’s Director of Technology Solutions, works and writes on the frontiers of advanced technology. He is a trusted adviser on leveraging technology in new ways and works within our Innovation Garage framework to evaluate new technologies and develop resources that support a portfolio of advanced services.
